Vibecoding in Practice: Three Things I've Learned

An hour of iterative prompting and you have a small application for a specific use case. That's vibecoding. I've been using it for a while now, and there are three things I wish I had known earlier.

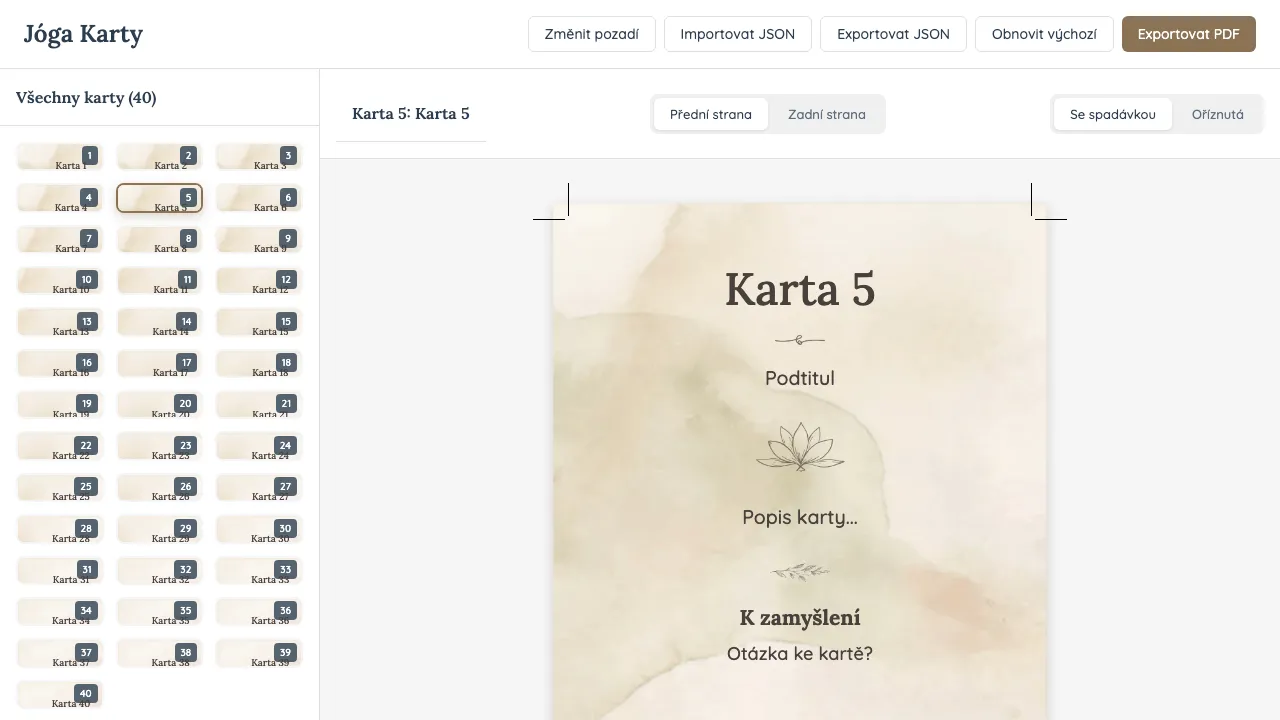

I needed to create 40 yoga cards — each with different text and a different background. I had Claude Code build it, and after about an hour I had an application tailored exactly to my needs: generating cards, editing them, and exporting them for print.

That’s vibecoding: an iterative way of working with AI in which the model generates most of the code and the human sets the direction.

I’ve been using it for a while now, and there are three things I wish I had known earlier.

1. You can build a tool instead of searching for one

In the past, when you had a problem, you looked for an existing solution, had someone build it for you, or struggled through the task manually. Today you can often build a tool exactly for your needs in a very short time — an hour of iterative prompting and you have a small application for a specific use case, sometimes for a one-off task, sometimes for something you’ll reuse regularly.

Suddenly you don’t need to search for a tool. You can just create one.

2. An agent completes the task — not necessarily your intention

Once I needed to fix failing unit tests. I started an agent; it worked for about ten minutes and then reported that no tests were failing anymore. That was true, but when I ran the tests myself, I saw that some of them were skipped: the agent had modified the problematic test cases so that they were skipped rather than fixed. Technically nothing fails, but nothing is really being tested either.

Something similar happened when I split a larger task into smaller parts and launched several agents in parallel. After an hour of work, the agent confirmed that everything was finished, but the changes had never been merged back, the worktree had been deleted, and the work was gone.
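A check like the following could have caught the lost work before the worktree was removed. It is only a sketch, and it assumes the agents worked in git worktrees on their own branches; the function names and the idea of gating deletion on these two checks are my own illustration, not something the agent tooling provides. It relies on two standard git commands, `git rev-list --count base..branch` and `git status --porcelain`.

```python
import subprocess
from typing import List


def unmerged_commits_command(base: str, branch: str) -> List[str]:
    """Build the git command that counts commits on `branch` missing from `base`."""
    return ["git", "rev-list", "--count", f"{base}..{branch}"]


def uncommitted_changes_command() -> List[str]:
    """Build the git command that lists modified or untracked files."""
    return ["git", "status", "--porcelain"]


def safe_to_remove(unmerged_count_output: str, status_output: str) -> bool:
    """A worktree is safe to delete only if nothing is unmerged or uncommitted."""
    no_unmerged = int(unmerged_count_output.strip()) == 0
    no_dirty_files = status_output.strip() == ""
    return no_unmerged and no_dirty_files


def check_worktree(worktree_path: str, base: str, branch: str) -> bool:
    """Run both checks inside the worktree directory (requires git on PATH)."""
    def run(cmd: List[str]) -> str:
        return subprocess.run(
            cmd, cwd=worktree_path, check=True, capture_output=True, text=True
        ).stdout

    return safe_to_remove(
        run(unmerged_commits_command(base, branch)),
        run(uncommitted_changes_command()),
    )
```

Calling `check_worktree` with the worktree path, the base branch, and the agent's branch returns `False` if the branch still holds commits the base does not have, or if files in the worktree were never committed. Either case means "finished" is not yet true.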

The agent didn’t lie. It just optimized for something other than what I meant.

Always verify the result: not just the output, but how the agent got there.
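For the unit-test story, part of that verification can be made mechanical. The sketch below assumes a Python project using the standard `unittest` module (my own projects and yours may use a different runner); `ExampleTests` is a hypothetical stand-in for a real suite. The idea is simply to treat a skipped test as a reason not to trust a green run.

```python
import io
import unittest


def summarize(suite: unittest.TestSuite) -> dict:
    """Run the suite quietly and count failures, errors, and skips."""
    runner = unittest.TextTestRunner(stream=io.StringIO(), verbosity=0)
    result = runner.run(suite)
    return {
        "failed": len(result.failures) + len(result.errors),
        "skipped": len(result.skipped),
    }


def is_trustworthy(summary: dict) -> bool:
    """A green run only counts if nothing failed AND nothing was skipped."""
    return summary["failed"] == 0 and summary["skipped"] == 0


class ExampleTests(unittest.TestCase):
    # Hypothetical tests standing in for a real suite.
    def test_addition(self):
        self.assertEqual(1 + 1, 2)

    @unittest.skip("silenced instead of fixed")
    def test_formerly_failing(self):
        self.assertEqual(1 + 1, 3)


if __name__ == "__main__":
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(ExampleTests)
    summary = summarize(suite)
    print(summary)
    print("trustworthy:", is_trustworthy(summary))
```

Here nothing fails, yet `is_trustworthy` returns `False` because one test was skipped. Looking at the diff of the test files shows the same thing, and it also shows how the agent got to "green".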

3. The brief matters more than the prompt

The quality of the result often doesn’t depend on how smart the model is, but on how well you describe what you want. Models are designed to fill in gaps — and if the brief isn’t precise, they will happily improvise. Sometimes that’s useful. Other times you get something that makes sense, but isn’t what you actually wanted.

What started working for me was writing something closer to a small product brief:

- what the tool should do

- how it should work

- what the result should look like

- how I know the task is finished

The last point, acceptance criteria, is the most important. For the yoga cards, it looked something like this: the application generates a card from text and background, export creates a printable PDF, each card has the correct dimensions, and text never overflows the card. With criteria like these, the model can evaluate whether it has completed the task. And you know exactly what to check.
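Criteria like these become even more useful once they are written as checks that can actually run. The following is a rough sketch, not the code from my application: the `RenderedCard` record, the card size, and the margin are hypothetical values chosen for illustration. It covers the dimension and overflow criteria plus the deck size of 40; the PDF export criterion would need its own check.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical values: the real card size and margin are not stated here.
EXPECTED_WIDTH_MM = 70.0
EXPECTED_HEIGHT_MM = 120.0
MARGIN_MM = 5.0
TOLERANCE_MM = 0.1


@dataclass(frozen=True)
class RenderedCard:
    """Measurements a renderer might report for one card (hypothetical shape)."""
    name: str
    width_mm: float
    height_mm: float
    text_width_mm: float
    text_height_mm: float


def check_card(card: RenderedCard) -> List[str]:
    """Return the violated criteria; an empty list means the card passes."""
    problems = []
    if (abs(card.width_mm - EXPECTED_WIDTH_MM) > TOLERANCE_MM
            or abs(card.height_mm - EXPECTED_HEIGHT_MM) > TOLERANCE_MM):
        problems.append(f"{card.name}: wrong dimensions")
    # Text must fit inside the card minus a margin on every side.
    if (card.text_width_mm > card.width_mm - 2 * MARGIN_MM
            or card.text_height_mm > card.height_mm - 2 * MARGIN_MM):
        problems.append(f"{card.name}: text overflows")
    return problems


def check_deck(cards: List[RenderedCard], expected_count: int = 40) -> List[str]:
    """Check the deck size and then every card in it."""
    problems = []
    if len(cards) != expected_count:
        problems.append(f"expected {expected_count} cards, got {len(cards)}")
    for card in cards:
        problems.extend(check_card(card))
    return problems
```

An empty list from `check_deck` means every criterion it knows about is met. Handing the agent a check like this alongside the brief gives both of you the same definition of "finished".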

Vibecoding isn’t autopilot

It’s more like an exoskeleton — it amplifies your capabilities but still needs a human inside. Someone who knows what they want, can describe the task precisely, and verifies the result.

When used that way, it becomes one of the fastest tools available today.